Winner's Excogitations

A chronicle of the thoughts, learning experiences, ideas and actions of a tech junkie, .NET, JS and Mobile dev, aspiring entrepreneur, devout Christian and travel enthusiast.

Essential NuGet Packages for .NET Development: Boost Your Productivity and Efficiency

3 years ago · 3 minutes read

As a .NET developer, you have access to a vast ecosystem of open-source libraries and frameworks that can help you accelerate your development process, enhance the functionality of your applications, and streamline your workflow. One of the key tools in the .NET ecosystem is NuGet, a package manager that allows you to easily discover, install, and manage third-party libraries in your .NET projects. In this blog post, we will explore some of the popular NuGet packages for .NET development that can greatly improve your productivity and efficiency.

Newtonsoft.Json

Json.NET, also known as Newtonsoft.Json, is a widely used and versatile JSON (JavaScript Object Notation) library for .NET. It serializes .NET objects to JSON, deserializes JSON to .NET objects, and lets you manipulate and query JSON data. Json.NET offers features such as LINQ-to-JSON and custom converters, with schema validation available through the separate Newtonsoft.Json.Schema package. It is used in a great many .NET applications and has long been the de facto standard for JSON serialization and deserialization in the .NET ecosystem.
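To make the serialization round trip concrete, here is a minimal sketch. The Book type and the JsonNetSketch helper are made up for this illustration; JsonConvert and JObject come from the Newtonsoft.Json package.

```csharp
using Newtonsoft.Json;
using Newtonsoft.Json.Linq;

// Hypothetical type used only for this illustration.
public class Book
{
    public string Title { get; set; }
    public int Pages { get; set; }
}

public static class JsonNetSketch
{
    // Serialize a .NET object to a JSON string.
    public static string ToJson(Book book) => JsonConvert.SerializeObject(book);

    // Deserialize a JSON string back into a .NET object.
    public static Book FromJson(string json) => JsonConvert.DeserializeObject<Book>(json);

    // LINQ-to-JSON: read a single value without declaring a matching class.
    public static string ReadTitle(string json) => (string)JObject.Parse(json)["Title"];
}
```

Calling JsonNetSketch.ToJson(new Book { Title = "Dune", Pages = 412 }) yields a compact JSON string that FromJson can turn back into a Book.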

Entity Framework Core

Entity Framework Core (EF Core) is a modern, lightweight, and cross-platform object-relational mapping (ORM) framework for .NET that enables developers to work with databases using .NET objects. EF Core is a complete rewrite of the original Entity Framework (EF) and is designed to be more modular, performant, and compatible with different database providers. EF Core provides a higher-level abstraction for interacting with databases, allowing you to model your database schema using .NET classes and work with data using familiar object-oriented programming (OOP) concepts. EF Core supports various database providers, such as SQL Server, MySQL, PostgreSQL, and SQLite, and offers features such as automatic code generation, caching, and query optimization. EF Core simplifies database access and enables you to write more maintainable and scalable data access code in your .NET applications.

AutoMapper

AutoMapper is a popular object-object mapping library for .NET that simplifies the process of mapping one object to another. It allows you to define mapping configurations between objects with different structures and automatically maps properties based on conventions or explicit mappings. AutoMapper eliminates the need for writing repetitive and error-prone mapping code, making object mapping in .NET applications more efficient and readable. It also supports advanced mapping scenarios, such as flattening, nesting, and customization, and can greatly reduce the amount of boilerplate code required for object mapping tasks.
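As a rough illustration of convention-based mapping and flattening, consider the sketch below. The Customer, Order and OrderDto types are invented for the example, and it assumes an AutoMapper release in which MapperConfiguration can be constructed from just the configuration lambda (to my knowledge, releases before version 15); newer releases may need extra arguments.

```csharp
using AutoMapper;

// Hypothetical source and destination types for this illustration.
public class Customer { public string Name { get; set; } }
public class Order { public int Id { get; set; } public Customer Customer { get; set; } }
public class OrderDto { public int Id { get; set; } public string CustomerName { get; set; } }

public static class AutoMapperSketch
{
    public static OrderDto ToDto(Order order)
    {
        // Assumption: this constructor overload exists in the installed version.
        // Real applications would build the configuration once and reuse it.
        var config = new MapperConfiguration(cfg => cfg.CreateMap<Order, OrderDto>());
        IMapper mapper = config.CreateMapper();

        // Id maps by name; CustomerName is filled from Customer.Name by the
        // flattening convention.
        return mapper.Map<OrderDto>(order);
    }
}
```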

Serilog

Serilog is a flexible and extensible logging library for .NET that provides a powerful and expressive way to log events and messages in your applications. It supports various log sinks, such as console, file, database, and third-party services, and allows you to configure logging behavior using a fluent and code-first configuration API. Serilog also supports advanced features, such as structured logging, event filtering, and custom log message templates, making it a powerful tool for logging and diagnostics in .NET applications. With its rich ecosystem of extensions and integrations, Serilog is widely used in many .NET applications for logging and tracing purposes.
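The fluent configuration and structured logging described above could look roughly like this. The sketch assumes that both the Serilog and Serilog.Sinks.Console packages are installed; the SerilogSketch class and the values logged are placeholders.

```csharp
using Serilog;

public static class SerilogSketch
{
    public static void Run()
    {
        // Code-first configuration: minimum level plus a console sink.
        Log.Logger = new LoggerConfiguration()
            .MinimumLevel.Debug()
            .WriteTo.Console()
            .CreateLogger();

        // Structured logging: OrderId and ElapsedMs are captured as named
        // properties on the event, not just formatted into the message text.
        var orderId = 42;
        Log.Information("Processed order {OrderId} in {ElapsedMs} ms", orderId, 18.5);

        // Flush any buffered events before the application exits.
        Log.CloseAndFlush();
    }
}
```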

Microsoft.Extensions.DependencyInjection

Microsoft.Extensions.DependencyInjection is a lightweight and extensible dependency injection (DI) container for .NET that provides a simple and flexible way to manage object dependencies in your applications. DI is a design pattern that promotes loosely coupled and testable code by allowing objects to request their dependencies instead of creating them directly. Microsoft.Extensions.DependencyInjection allows you to define service registrations and resolve dependencies using a fluent and expressive

[HOW TO] Run A Geospatial Search With EF Core and Npgsql

5 years ago · 3 minutes read

Introduction

As a developer, progressing in your career means building more and more complex applications with more strenuous requirements. That may include applications that have geospatial requirements. A common scenario you may face is finding entities which fall within a given geographic boundary provided by Google Maps or any other mapping service.

For this guide, we'll examine a hypothetical scenario where we have a bunch of restaurant entities that we need to display on a map. We'll demonstrate this using C#, Entity Framework Core, Npgsql and Postgres.

Setup

For this, I'll assume that you have the .NET Core SDK installed on your local machine (if you don't, then this installation walkthrough should help), that you have some experience with C# and .NET Core, and that you have an ASP.NET Core project set up.

If you don't already have Entity Framework and Npgsql installed in your projects, then you'd need to run these commands to get the packages installed:

$ dotnet add package Microsoft.EntityFrameworkCore

$ dotnet add package Npgsql.EntityFrameworkCore.PostgreSQL

We also need to add support for geospatial types by adding this package as well:

$ dotnet add package Npgsql.EntityFrameworkCore.PostgreSQL.NetTopologySuite

For any project utilizing Entity Framework, we need a database context. That is a specialized class that details the collections that our database "understands" or has "context" on. I won't be going into a lot of detail about database contexts as I assume that you understand them fairly well already. For this guide, we'll have a database context file called AppDbContext.cs, which should contain:

using Microsoft.EntityFrameworkCore;

public class AppDbContext : DbContext

{

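// the setter lets EF Core initialize the set
public DbSet<Restaurant> Restaurants { get; set; }

// the options must be typed to the context, not to the entity
public AppDbContext(DbContextOptions<AppDbContext> options): base(options)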

{

}

// you'd also need the PostGIS extension added

protected override void OnModelCreating(ModelBuilder modelBuilder)

{

modelBuilder.HasPostgresExtension("postgis");

}

}

If you copied and pasted the code above into your text editor or IDE, you would most definitely have some red squiggly lines under the Restaurant references as that class does not exist yet. We'd be adding that as well. To our Restaurant.cs file, we add the following:

using NetTopologySuite.Geometries;

public class Restaurant

{

public int Id { get; set; }

public string Name { get; set; }

// to persist the geo location of each restaurant

public Point Location { get; set; }

}

You also need to ensure that the database connection configuration is set up to enable querying for and by geospatial info. So in your Startup.cs file, you need to have a line akin to:

...

public void ConfigureServices(IServiceCollection services)

{

...

services.AddDbContext<AppDbContext>(options =>

options.UseNpgsql("<your db connection string>", builder =>

{

builder.UseNetTopologySuite();

}));

...

}

Querying

Usually, when working with mapping services like Google Maps or MapBox or Bing Maps, the visible bounds of the map are provided as the northern latitude, the southern latitude, the east longitude and west longitude as decimal values. With those values given, we can query against our database to find out which of our restaurants fall within the visible map view.

To query, we need to construct coordinates of the four corners of the view as well as create a polygon defining the area to search. With that, our search method would look something like this:

// populate this through DI via your constructor

private readonly AppDbContext _dbContext;

private readonly GeometryFactory _geometryFactory =

new(new PrecisionModel(), 4326); // 4326 represents WGS 84

...

public async Task<List<Restaurant>> GetRestaurantsInView(double north, double east, double south, double west)

{

var nw = new Coordinate(west, north);

var ne = new Coordinate(east, north);

var sw = new Coordinate(west, south);

var se = new Coordinate(east, south);

var polygon = new Polygon(new LinearRing(new[] {ne, nw, sw, se, ne}), Array.Empty<LinearRing>(),

_geometryFactory);

// await the query (the method is async) and use the Restaurants set from AppDbContext
return await _dbContext.Restaurants

.Where(x => x.Location.Within(polygon))

.ToListAsync();

}
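To see how the bounds-to-polygon step behaves without a database, here is a small in-memory sketch using NetTopologySuite alone. The BoundsSketch class and its method names are invented for this example. Note that NetTopologySuite coordinates are (X, Y), which for WGS 84 means (longitude, latitude).

```csharp
using NetTopologySuite.Geometries;

public static class BoundsSketch
{
    // 4326 is the SRID for WGS 84, matching the factory used in the article.
    private static readonly GeometryFactory Factory =
        new GeometryFactory(new PrecisionModel(), 4326);

    // Build a closed rectangle from the map bounds. The first and last
    // coordinates must be identical to close the ring.
    public static Polygon FromBounds(double north, double east, double south, double west)
    {
        return Factory.CreatePolygon(new[]
        {
            new Coordinate(west, north),
            new Coordinate(east, north),
            new Coordinate(east, south),
            new Coordinate(west, south),
            new Coordinate(west, north),
        });
    }

    // The same Within test that EF Core translates to SQL, evaluated in memory.
    public static bool IsInView(Polygon view, double latitude, double longitude)
    {
        var point = Factory.CreatePoint(new Coordinate(longitude, latitude));
        return point.Within(view);
    }
}
```

A check like this can be handy in unit tests, where you want to confirm the corner ordering before involving PostGIS.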

If you wanted to exclude some interior section of the given area, then you'd need to replace Array.Empty<LinearRing>() with an array of LinearRing instances, one for each interior section (hole) to exclude.
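As a sketch of what excluding an interior section could look like, the example below builds a square with a smaller square cut out of its middle. HoleSketch, Box and the specific coordinates are made up for illustration.

```csharp
using NetTopologySuite.Geometries;

public static class HoleSketch
{
    private static readonly GeometryFactory Factory =
        new GeometryFactory(new PrecisionModel(), 4326);

    // Helper that builds a closed rectangular ring.
    private static LinearRing Box(double minX, double minY, double maxX, double maxY)
    {
        return Factory.CreateLinearRing(new[]
        {
            new Coordinate(minX, minY),
            new Coordinate(maxX, minY),
            new Coordinate(maxX, maxY),
            new Coordinate(minX, maxY),
            new Coordinate(minX, minY),
        });
    }

    // Outer shell from (0,0) to (10,10) with a hole from (4,4) to (6,6).
    public static Polygon BoxWithHole()
    {
        return Factory.CreatePolygon(Box(0, 0, 10, 10), new[] { Box(4, 4, 6, 6) });
    }

    // True when the point lies inside the shell but outside every hole.
    public static bool IsInside(Polygon area, double x, double y)
    {
        return Factory.CreatePoint(new Coordinate(x, y)).Within(area);
    }
}
```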

Conclusion

That's about it. I created this guide because I could not find a simple walkthrough around querying against geospatial fields and the library documentation pages don't help much.

Cheers.

Using the Firebase realtime database with .NET

6 years ago · 4 minutes read

A Brief Aside

In my more than six years in the software development space, and specifically in the .NET ecosystem, I have noticed a kind of animosity towards Microsoft and the .NET platform. Companies create services and develop libraries for just about every other platform but .NET. The excuse cannot be lack of demand, as C# is the 7th most used language and ASP.NET the 4th most used web framework according to the Stack Overflow developer survey. Despite my ire with the current situation, I do not think it is wholly undeserved, as Microsoft has acted in a similar manner over the years, though the organization under Satya Nadella has changed its approach to collaboration. Hopefully, things improve.

Introduction

Firebase is a Backend-as-a-Service (BaaS) that started as a YC11 startup and grew up into a next-generation app-development platform on Google Cloud Platform. Arguably, the most widely used product in the Firebase suite is its real-time database. The Firebase Realtime Database is a cloud-hosted NoSQL database that lets you store and sync data between your users in realtime. The Realtime Database is really just one big JSON object that developers can manage in realtime.

Why Firebase?

For a mobile developer with little backend development skill, or for a developer who is time-constrained in delivering a mobile product, Firebase takes away the need to build out a dedicated backend to power your mobile service. It handles data persistence and, if you so desire, authentication. For officially supported platforms, it even offers fail-safes for data if there is a network connectivity interruption. Sadly, .NET is not currently an officially supported platform. I remember seeing a petition or thread of some sort requesting official support from Google but can't seem to find it. Fortunately, we have a workaround. The fine folks over at Step Up Labs wrote a wrapper around the Firebase REST API which gives us access to our data.

Installation

Now to the juicy bits: we need to install the library. In the shared .NET Standard Xamarin project, run one of the following commands, depending on your preference:

Install-Package FirebaseDatabase.net

or

dotnet add package FirebaseDatabase.net

Handling Data

We need to create a model for the data we need to persist and modify. For that, we create a directory called Models. Next, we create a file Student.cs to hold our Student model as defined below:

public class Student

{

public string FullName {get; set;}

public string Age {get; set;}

}

The next step is CRUD (Create, Read, Update and Delete) for our data. In order to keep everything all tidy and such, we create a directory Services and a file StudentService.cs to hold our service logic. Remember, data in Firebase has to be stored as key-value pairs. To add support for persisting data to our service, we do the following:

using System;

using System.Threading.Tasks; // needed for Task

using Firebase.Database;

using Firebase.Database.Query;

public class StudentService

{

private const string FirebaseDatabaseUrl = "https://XXXXXX.firebaseio.com/"; // XXXXXX should be replaced with your instance name

private readonly FirebaseClient firebaseClient;

public StudentService()

{

firebaseClient = new FirebaseClient(FirebaseDatabaseUrl);

}

public async Task AddStudent(Student student)

{

await firebaseClient

.Child("students")

.PostAsync(student);

}

}

To make use of our service, we add the following. It can go in the code-behind files of views or in other services:

...

public StudentService service = new StudentService();

...

var student = new Student

{

FullName = "John Doe",

Age = "21 Years"

};

await service.AddStudent(student);

To retrieve the students we have saved to the database, we can add a new method to our StudentService class:

using System.Collections.Generic;

using System.Linq; // needed for Select and ToList

...

public class StudentService

{

...

public async Task<List<KeyValuePair<string, Student>>> GetStudents()

{

var students = await firebaseClient

.Child("students")

.OnceAsync<Student>();

return students?

.Select(x => new KeyValuePair<string, Student>(x.Key, x.Object))

.ToList();

}

}

As you can observe with the data retrieval above, when we push new data to Firebase, a new Id is generated for the record and we can get that Id when we retrieve our data. The Id comes in useful when we need to update data we have on Firebase as shown below:

public class StudentService

{

...

public async Task UpdateStudent(string id, Student student)

{

// PutAsync returns no value, so there is nothing to assign
await firebaseClient

.Child("students")

.Child(id)

.PutAsync(student);

}

}

Removing an entry is just as easy; we just need the id generated for the entry we want to remove. Update your StudentService class with a method to aid removal as shown below:

public class StudentService

{

...

public async Task RemoveStudent(string id)

{

// DeleteAsync returns no value, so there is nothing to assign
await firebaseClient

.Child("students")

.Child(id)

.DeleteAsync();

}

}

Further Reading

The complete source for the samples shown can be found on GitHub. While I touched on the basics of accessing data from Firebase, the FirebaseDatabase.net library offers support for more advanced data query options such as LimitToFirst and OrderByKey, amongst others. It also offers data streaming similar to that of the official libraries, by way of the System.Reactive.Linq namespace. You can find more in-depth documentation at the project GitHub page.
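For a feel of those query and streaming options, here is a hedged sketch based on my reading of the FirebaseDatabase.net README. The StudentQueries class is hypothetical, the Student type mirrors the model defined earlier, and the exact shape of the streamed event object may differ between library versions.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;
using Firebase.Database;
using Firebase.Database.Query;

// Mirrors the Student model from earlier in the post.
public class Student
{
    public string FullName { get; set; }
    public string Age { get; set; }
}

public static class StudentQueries
{
    // Fetch the keys of the first two students, ordered by their generated key.
    public static async Task<List<string>> FirstTwoKeys(FirebaseClient client)
    {
        var page = await client
            .Child("students")
            .OrderByKey()
            .LimitToFirst(2)
            .OnceAsync<Student>();

        return page.Select(x => x.Key).ToList();
    }

    // Stream changes under "students"; dispose the result to stop listening.
    public static IDisposable Watch(FirebaseClient client)
    {
        return client
            .Child("students")
            .AsObservable<Student>()
            .Subscribe(change => Console.WriteLine(change.Key));
    }
}
```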

That's it for now,

Cheers.

[HOW TO] Generate .NET Core code coverage with Coverlet

6 years ago · 3 minutes read

Brief Intro

In the early days of .NET Core, there was a reliable, built-in testing system but no code coverage tool to gain insight into the scope of testing being done. While the full .NET Framework was spoilt for choice when it came to code coverage tools, from OpenCover to dotCover, there was little to nothing available for the nascent .NET Core.

Doing some research in those early days, it didn't seem like any of the established coverage tool developers were in a rush to add .NET Core support and who could blame them? There was no evidence this was not going to end up going the way of Silverlight, so I understand why they hedged their bets.

Coverlet

tonerdo, along with others in the awesome .NET community, created an open-source code coverage tool called Coverlet. This tool integrates itself into the msbuild system, instruments code and can generate coverage in a number of supported formats.

Installation

To set your project up for coverage, run the following in your terminal from each test project folder:

dotnet add package coverlet.msbuild

dotnet add package Microsoft.NET.Test.Sdk

With that, you are set to run tests and generate coverage.

Basic Usage

If you are looking to generate coverage data for a single test project or generate separate coverage files for multiple test projects, then the following simple call will suffice. It generates coverage in JSON format and outputs a coverage file.

dotnet test /p:CollectCoverage=true

If you want to specify the output format, then an additional flag needs to be added. The formats supported currently are json, lcov, opencover, cobertura and teamcity. For example, to generate coverage in the opencover format, run the following:

dotnet test /p:CollectCoverage=true /p:CoverletOutputFormat=opencover

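To generate coverage in multiple formats, separate the required formats by a comma (,). Depending on your shell and SDK version, the comma-separated list may need to be quoted or escaped so that MSBuild does not treat the comma as a property separator. For example, to generate both lcov and json formats, we run the following: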

dotnet test /p:CollectCoverage=true /p:CoverletOutputFormat=lcov,json

Further flags and options and documentation can be found at the project wiki here.

Handling coverage for split test projects

There may be situations where you want to generate a single coverage file from multiple test projects, for example to report the coverage of an entire project instead of its component bits. It gets a bit verbose, but coverlet still covers (terrible pun, I know) us. For example, if I have three test projects, descriptively named Test1, Test2 and Test3, and I want to generate a single coverage file in the opencover format, then I have to run the following in sequence:

dotnet test Test1/Test1.csproj /p:CollectCoverage=true /p:CoverletOutput="./results/"

dotnet test Test2/Test2.csproj /p:CollectCoverage=true /p:CoverletOutput="./results/" /p:MergeWith="./results/coverage.json"

dotnet test Test3/Test3.csproj /p:CollectCoverage=true /p:CoverletOutput="./results/" /p:MergeWith="./results/coverage.json" /p:CoverletOutputFormat="opencover"

To explain what the above process does: first, we generate the coverage in the json format and only convert to our desired format (in this case opencover) when we get to the last test project. Second, we need a specified folder where all the coverage files get dumped so they can be combined; in this case our output folder is ./results. Finally, as mentioned earlier, we specify our desired output format on the last test run, and that run does the combining.

Hope this helps. Cheers.

Create A URL Shortener With ASP.NET Core and MongoDB

6 years ago · 6 minutes read

What is a URL Shortener

A URL Shortener, as Rebrandly would put it, is "a simple tool that takes a long URL and turns it into whatever URL you would like it to be". And that is all there is to it. A URL Shortener takes a URL, usually a long one, and converts it into a shorter URL.

Why use a URL Shortener

Using a URL shortener comes with a number of advantages:

- Shorter URLs are easier to remember.

- The shorter URLs allow for links to be shared via social media platforms that have hard text limits such as Twitter.

- For a number of commercial shortening services, you can track clicks and view compiled data on each generated link.

- Shortened URLs usually just look "better" or more aesthetically pleasing if we want to get technical 😄

Scope of this article

The aim of this article is to demonstrate interfacing with MongoDB using the first-party Mongo client library, as well as optimizations we can add to boost the performance of our application.

Technologies

If the title of the article was not a dead giveaway, we would be employing the following technologies:

- .NET Core (Runtime)

- ASP.NET Core (High-performance web framework)

- MongoDB (Document oriented NoSQL database)

- Mongo Client (Official MongoDB client library for .NET)

- ShortId (Short URL-safe id generator for .NET)

Prerequisites

To adequately follow this guide, you need two things installed and running on your local machine:

- The .NET Core SDK. If you do not have it installed, you can follow the installation instructions here

- The MongoDB Database Server. If you do not have it installed either, you can get the community (free) edition from here

Setting up

First, we create our application folder by running

mkdir url-shortener

Next, we change directories by running

cd url-shortener

Next, we create a new ASP.NET Core project

dotnet new mvc

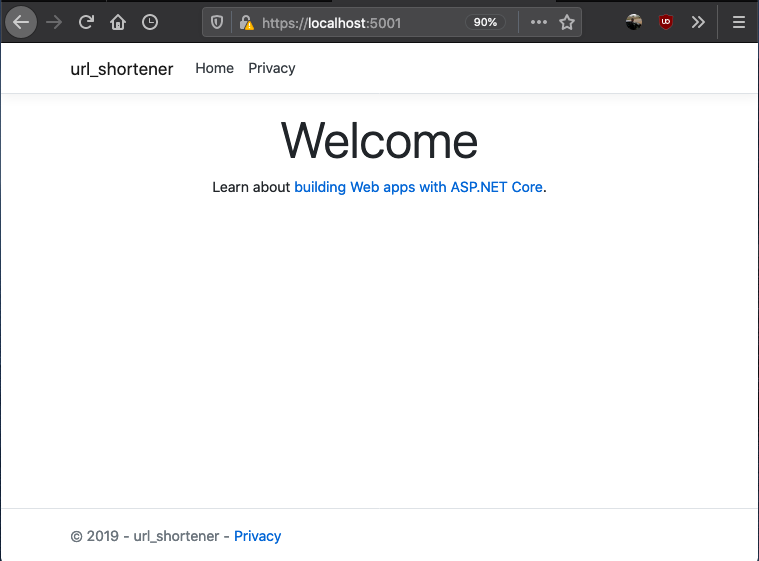

The line above creates a new MVC project. You can choose to use Razor Pages or one of the SPA templates offered by the CLI instead. To see the scaffolded app in action, you can run

dotnet run

If everything works as expected, your application should be running on http://localhost:5000, and if you visit the URL you should see

Setting the server up

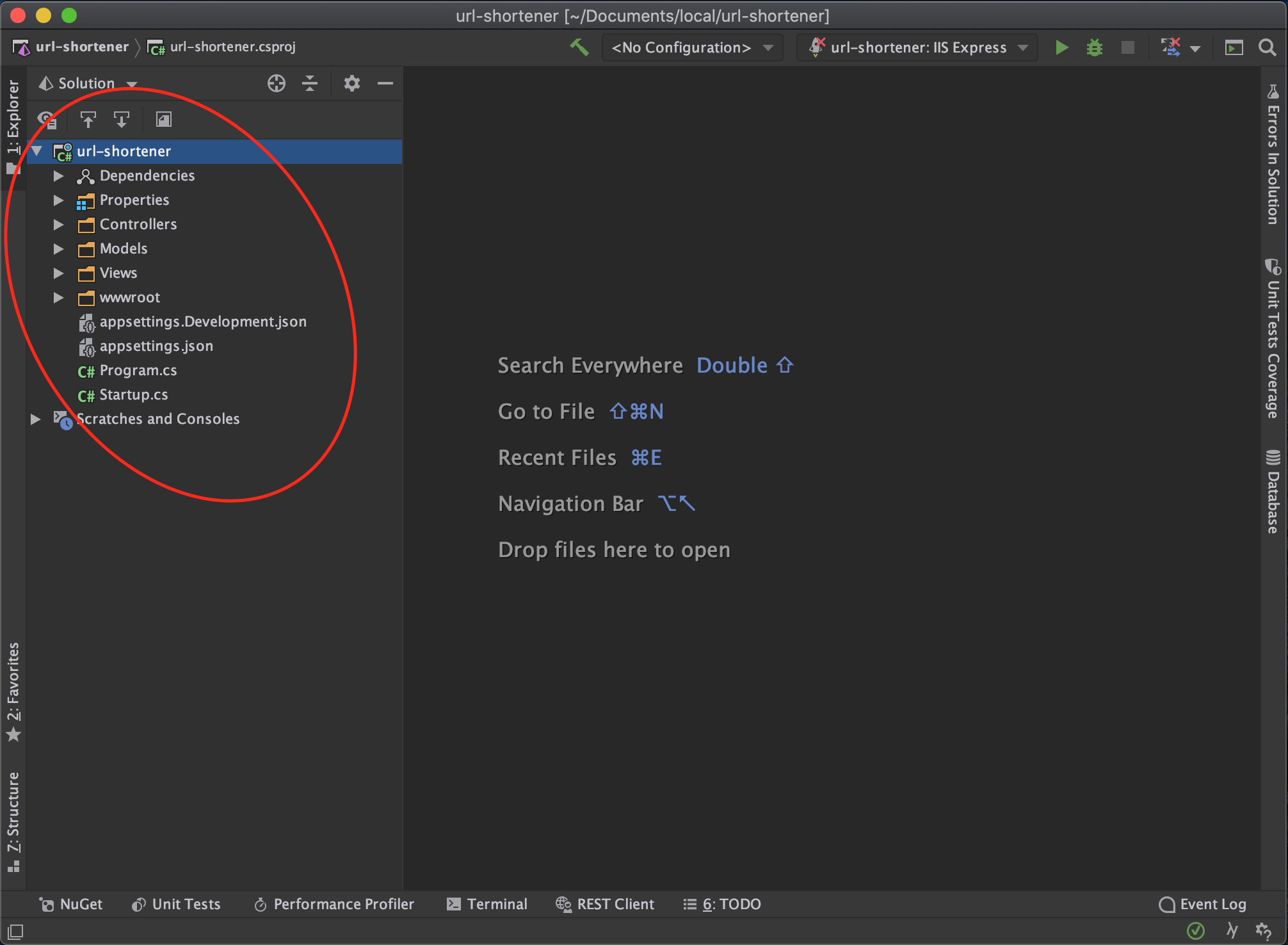

At this point, you can open the project up in your favourite code editor or Integrated Development Environment. For some, it is VSCode, Visual Studio, Sublime Text, or Atom (I don't judge). For me, the IDE of choice is JetBrains Rider.

You should have a folder structure similar to that shown below

Next, we install the packages we need to get our service up and running

dotnet add package MongoDB.Driver

dotnet add package shortid

Next, we want to create our data model. First off create a directory named Models and a class file named ShortenedUrl.cs and add the following details

using System; // needed for DateTime

using MongoDB.Bson;

using MongoDB.Bson.Serialization.Attributes;

public class ShortenedUrl

{

[BsonId]

public ObjectId Id { get; set; }

public string OriginalUrl { get; set; }

public string ShortCode { get; set; }

public string ShortUrl { get; set; }

public DateTime CreatedAt { get; set; }

}

Next, we set up Mongo database in our controller. In the HomeController add the following

using MongoDB.Driver;

...

public class HomeController: Controller

{

// named _mongoDatabase to match the controller actions that use it
private readonly IMongoDatabase _mongoDatabase;

private const string ServiceUrl = "http://localhost:5000";

public HomeController()

{

var connectionString = "mongodb://localhost:27017/";

var mongoClient = new MongoClient(connectionString);

_mongoDatabase = mongoClient.GetDatabase("url-shortener");

}

}

In the case above, url-shortener is the database name given and it can be changed to anything else. The next step for us is to create a controller method that takes in the long URL and generates a short URL. This particular method checks the database first, and only if the URL has not been shortened before does it generate a short code and store a new entry.

using MongoDB.Driver.Linq;

using shortid;

using url_shortener.Models;

...

public class HomeController : Controller

{

...

[HttpPost]

public async Task<IActionResult> ShortenUrl(string longUrl)

{

// get shortened url collection

var shortenedUrlCollection = _mongoDatabase.GetCollection<ShortenedUrl>("shortened-urls");

// first check if we have the url stored

var shortenedUrl = await shortenedUrlCollection

.AsQueryable()

.FirstOrDefaultAsync(x => x.OriginalUrl == longUrl);

// if the long url has not been shortened

if (shortenedUrl == null)

{

var shortCode = ShortId.Generate(length: 8);

shortenedUrl = new ShortenedUrl

{

CreatedAt = DateTime.UtcNow,

OriginalUrl = longUrl,

ShortCode = shortCode,

ShortUrl = $"{ServiceUrl}/{shortCode}"

};

// add to database

await shortenedUrlCollection.InsertOneAsync(shortenedUrl);

}

return View(shortenedUrl);

}

}

Next, we have to support redirecting to long URLs when the short URL link is entered into the address bar. And for that, we add an override to the default Index route that supports having a short code. The implementation for that controller endpoint is as follows

[HttpGet]

public async Task<IActionResult> Index(string u)

{

// get shortened url collection

var shortenedUrlCollection = _mongoDatabase.GetCollection<ShortenedUrl>("shortened-urls");

// first check if we have the short code

var shortenedUrl = await shortenedUrlCollection

.AsQueryable()

.FirstOrDefaultAsync(x => x.ShortCode == u);

// if the short code does not exist, send back to home page

if (shortenedUrl == null)

{

return View();

}

return Redirect(shortenedUrl.OriginalUrl);

}
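The check-then-create flow of ShortenUrl and the lookup done by Index can be illustrated without MongoDB using an in-memory sketch. InMemoryShortener is a made-up class, the dictionaries stand in for the shortened-urls collection, and the Guid-based code is only a stand-in for ShortId.Generate. Collisions between generated codes are ignored here for brevity.

```csharp
using System;
using System.Collections.Generic;

public class InMemoryShortener
{
    // Two lookups stand in for the two queries made against the collection.
    private readonly Dictionary<string, string> _codeByUrl = new Dictionary<string, string>();
    private readonly Dictionary<string, string> _urlByCode = new Dictionary<string, string>();

    // Return the existing code for a URL, or create and store a new one.
    public string Shorten(string longUrl)
    {
        if (_codeByUrl.TryGetValue(longUrl, out var existing))
        {
            return existing;
        }

        // Stand-in for ShortId.Generate: first 8 hex characters of a Guid.
        var code = Guid.NewGuid().ToString("N").Substring(0, 8);
        _codeByUrl[longUrl] = code;
        _urlByCode[code] = longUrl;
        return code;
    }

    // Return the original URL for a code, or null when the code is unknown
    // (the controller falls back to the home page in that case).
    public string Resolve(string code)
    {
        return _urlByCode.TryGetValue(code, out var url) ? url : null;
    }
}
```

Shortening the same URL twice returns the same code, which is the behaviour the database check in ShortenUrl is there to provide.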

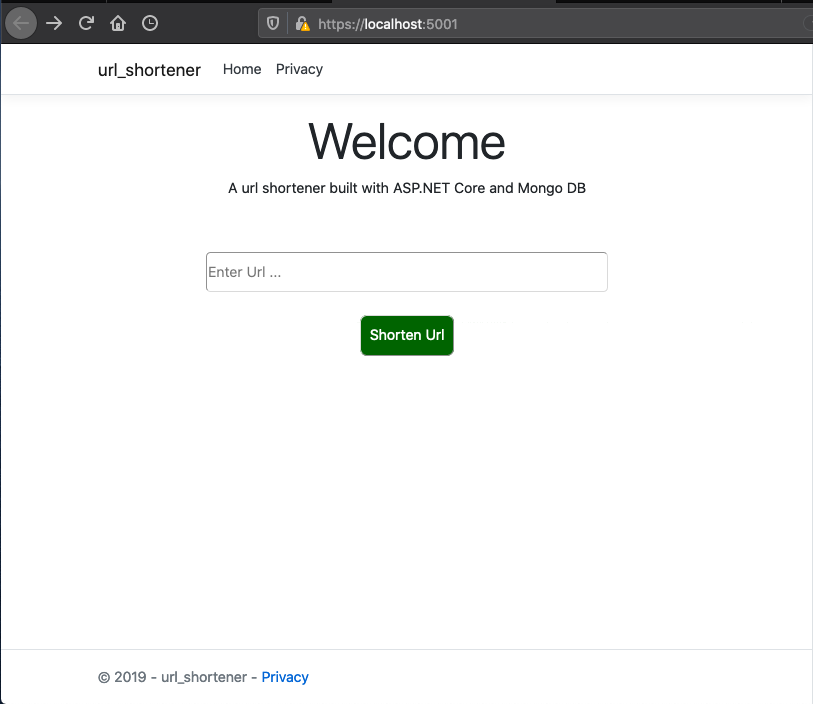

Setting Up The Client Side

To receive the long URL, we need a form with an input control and a button to send the data to the server side. The home page is implemented as follows

@{

ViewData["Title"] = "Home Page";

}

<div class="text-center">

<h1 class="display-4">Welcome</h1>

<p>A url shortener built with ASP.NET Core and Mongo DB</p>

</div>

<div style="width: 100%; margin-top: 60px;">

<div style="width: 65%; margin-left: auto; margin-right: auto;">

<form id="form" style="text-align: center;" asp-action="ShortenUrl" method="post">

<input

type="text"

placeholder="Enter Url ..."

style="width: 100%; border-radius: 5px; height: 45px;"

name="longUrl"/>

<button

style="background-color: darkgreen; color: white; padding: 10px; margin-top: 25px; border-radius: 8px;"

type="submit">

Shorten Url

</button>

</form>

</div>

</div>

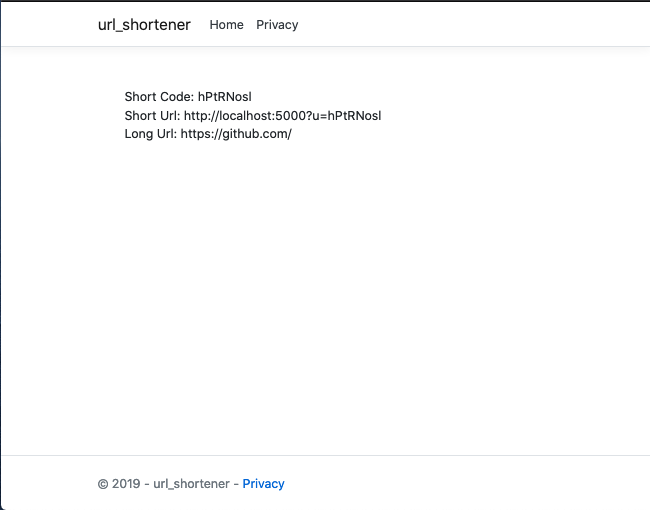

To show the generated URL, we need a new view named ShortenUrl.cshtml under the Views/Home directory with the following content

@model ShortenedUrl;

@{

ViewData["Title"] = "Shortened Url";

}

<div style="width: 100%; padding: 30px;">

<div>

<div>Short Code: @Model.ShortCode</div>

<div>Short Url: @Model.ShortUrl</div>

<div>Long Url: @Model.OriginalUrl</div>

</div>

</div>

A sample URL generated response would look like

The entire source code for this article can be found here. In a follow-up article, we would benchmark the current implementation and take steps to improve performance.

Till the next one,

Adios